Taro Murai, CEO & President of Kudan USA

»Many reasons to use proven third-party SLAM-software«

SLAM-software is a powerful way to provide accurate localization for devices such as robots or drones in dynamic environments. Several open-source resources exist, but companies that try to use them often fail, says Taro Murai of Kudan, one of this year's embedded award winners.

Kudan won the embedded award in the start-up category for one of its API software products: Kudan Visual SLAM (KdVisual). It provides repeatable positional accuracy of less than 1 cm. The software generates a point cloud map of a device's surroundings and, at the same time, pinpoints the device's location and orientation on that map at any given moment. To do so, it uses a method called Simultaneous Localization and Mapping (SLAM) with cameras as the primary sensor, known as »Visual SLAM«.

Mr. Murai, can you give us a pitch on Kudan and KdVisual please?

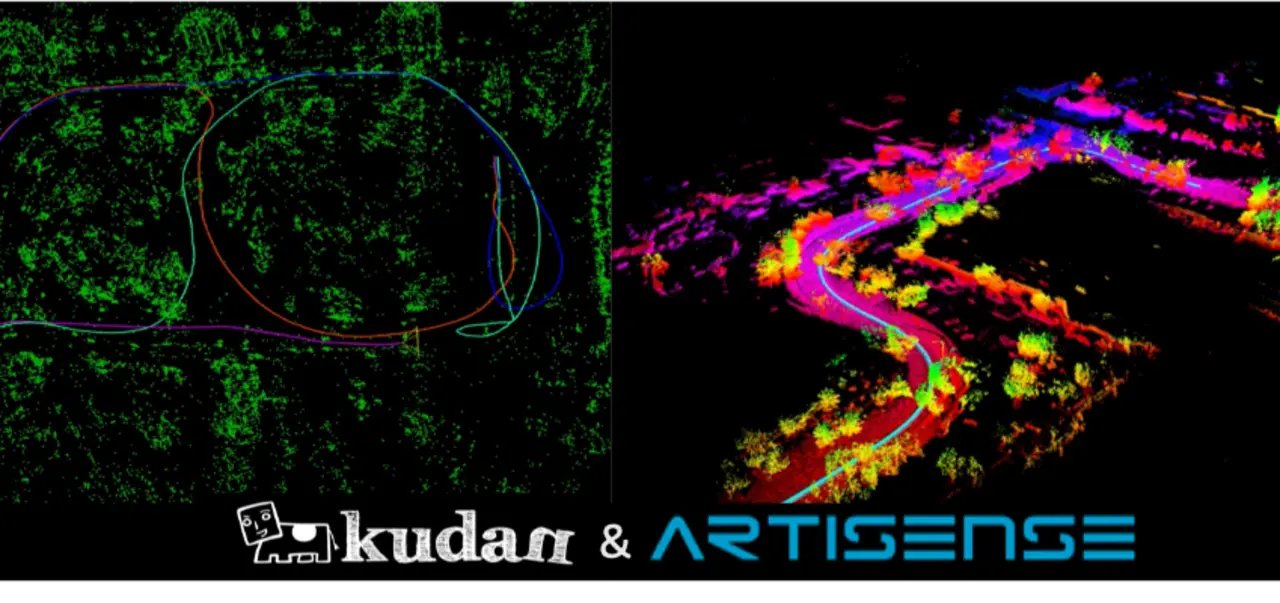

Kudan was founded in Bristol, UK, so R&D for KdVisual SLAM and Lidar SLAM takes place in the UK. We are in the process of acquiring a complementary SLAM software provider called Artisense, based in Munich, Germany. With more than 30 SLAM-focused engineers from Kudan and Artisense, we are the largest independent SLAM software company in the world. We support three different types of SLAM-software: direct visual SLAM through Artisense, and indirect visual SLAM and Lidar SLAM through Kudan. Together these cover most of our customers' needs and their target platforms.

Visual SLAM tends to be seen as less reliable and accurate than 2D-Lidar SLAM because its algorithms are complex and difficult to implement properly. KdVisual counters this perception and delivers significantly better performance: it is fast, accurate and repeatable enough to meet industrial robotics requirements. It is built to work in a variety of environments and dynamic scenes, supports flexible sensor and processor choices, and is easy to integrate into new and existing systems and platforms.

How many SLAM-software products are on the market?

Many products use SLAM, but the number of commercially available SLAM-software providers is quite limited. There are two main reasons for this. First, building original SLAM-software from scratch requires several years of R&D with a team of computer vision engineers. Even adapting open-source SLAM software for commercial purposes requires years of optimization and tuning.

Second, M&A activity has been high in this market, and many SLAM-software providers have been acquired by technology giants in the consumer device and automotive sectors. Today, many companies try open-source SLAM-software first and then realize that it fails to meet commercial requirements in terms of accuracy, robustness, memory and CPU usage.

Where do companies struggle?

Many companies start with open-source SLAM-software such as ORB-SLAM. However, there are many hurdles that are not easy for a small team to overcome. These include handling real-life data robustly, adapting to the requirements of different sensors and processors, fitting within the available memory and processing budget, and dealing with limited or missing support during integration. Companies then realize that it will take another year or more, even with a large R&D budget, before they have a reliable solution of their own.

How do customers integrate KdVisual?

Our customers typically choose one of two methods, depending on their existing platform and the level of customization required. We can provide our SLAM-software as a library that customers use to build a localization stack. In that case we also provide support during integration and development, and create any new features that may be required. Alternatively, we can provide our library as a ROS node that can be added to an existing ROS-based system.

In addition, we serve solution providers together with our engineering partners. We provide the KdVisual library to a partner, who then develops hardware modules with KdVisual inside. The solution provider builds its solution using these hardware modules, which provide vision and localization capabilities.

What are your target applications for KdVisual?

KdVisual’s primary target application is industrial robotics, such as autonomous mobile robots and automated guided vehicles. This is followed by ADAS, consumer robotics and AR/VR. We believe the robustness and performance of our SLAM algorithm give us an advantage in the highly demanding industrial robotics space, but we provide unmatched value wherever fast and accurate localization and spatial awareness are needed.

You have already partnered with companies like Analog Devices, Nvidia and Qualcomm. How do you want to expand the ecosystem further?

We have shifted our focus to »deepening« rather than »broadening«. We have been actively expanding our ecosystem and forming meaningful partnerships with many industry-leading players, but now we are putting more effort into strengthening the relationships with our existing partners. We are optimizing our SLAM algorithms for specific processing units and sensors so that our joint customers can enable computer vision through SLAM more easily and take advantage of our collaborative solution.

What are the next steps on your roadmap, and will you show them at next year’s embedded world?

For KdVisual, we are working on features that will significantly increase robustness against scene changes and dynamic objects while keeping processing efficient. In the longer term, we aim to fuse camera and 3D-Lidar data to bring the best of both worlds together in one SLAM system. We hope to be able to show some of these new features at the next embedded world event.