Developing sustainable AI systems

Study defines criteria

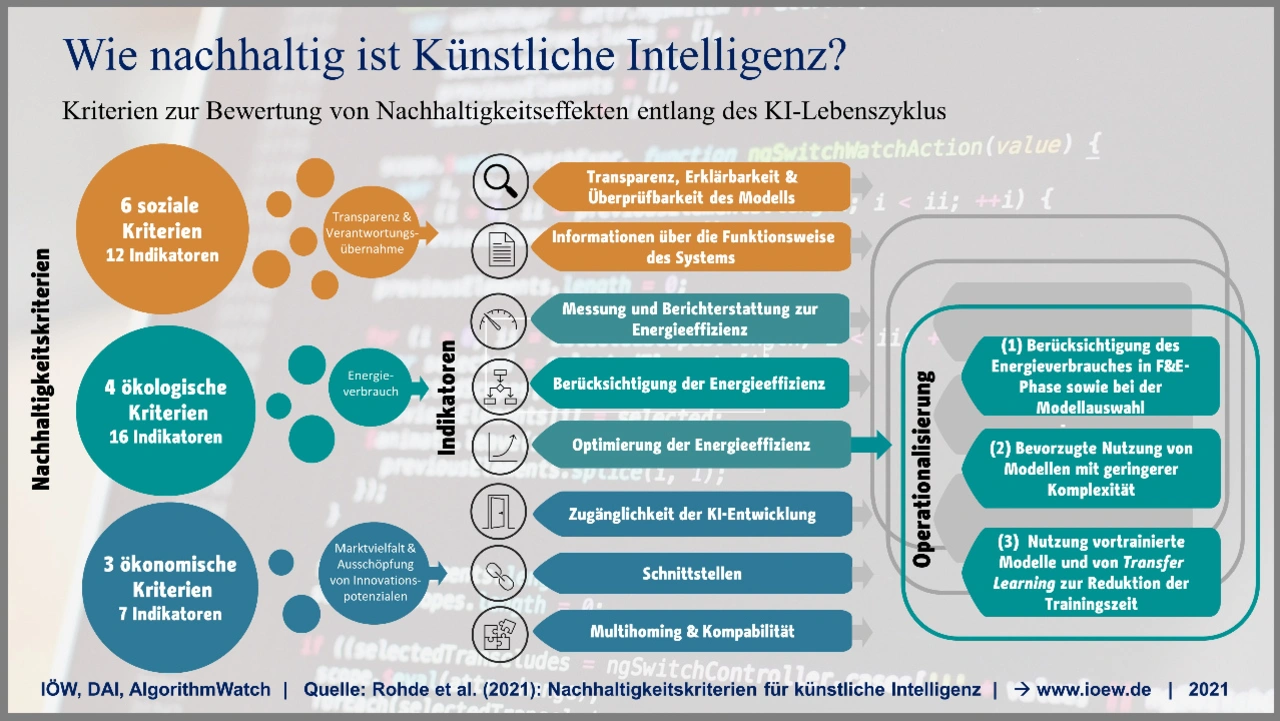

A first study on the sustainability of AI systems sets out more than 50 indicators describing which aspects have to be considered when using AI. This matters for preparing for upcoming regulations such as the EU's Artificial Intelligence Act.

From speech recognition, personalized news feeds and chat bots to machine-optimized industrial processes: artificial intelligence (AI) and machine learning are pervasive in everyday life and have arrived in industry.

However, many questions arise for developers – regarding the transparency of decision-making processes, discrimination or an increasing energy demand during model development. Can these broad impacts of AI systems be analyzed systematically and comparatively?

For the first time, a research team from AlgorithmWatch, the Institute for Ecological Economy Research (IÖW) and the Distributed Artificial Intelligence Laboratory at the Technical University of Berlin has comprehensively investigated this question. The result is a set of criteria and indicators for sustainable AI.

Evaluating the sustainability of AI systems

The researchers analyzed which sustainability effects occur along the AI lifecycle – from data model and system design, to model development and use, to hardware disposal. More than 50 indicators describe how criteria such as transparency, self-determination, inclusive design and cultural sensitivity, as well as resource consumption, greenhouse gas emissions or distributional impacts in target markets of AI applications, can be captured.

»Currently, there is a lot of discussion under the buzzwords 'AI for Earth' or 'AI for Good' about how AI can be used to contribute to sustainable development,« says study author Friederike Rohde, a sociologist at the IÖW. »However, the sustainability impacts of AI systems themselves are not considered. Yet considering them is necessary to create awareness of sustainability risks and to minimize them. With our criteria and indicators, we want to help develop concrete assessment tools that show how AI systems can be made more sustainable.«

Concrete starting points

With regard to political regulatory approaches, it is also important to show developers in detail what the sustainability of AI systems actually entails. Relevant initiatives include the European Union's Artificial Intelligence Act, the sustainability efforts for data centers by Germany's "traffic light" coalition, and guidelines such as UNESCO's new AI Ethics Recommendation.

»In our study, we first worked out how the sustainability of AI systems can be assessed. The next step is to develop concrete tools to show in practice how AI systems can become more sustainable,« says Anne Mollen, project manager at AlgorithmWatch. »We explicitly want feedback from the field, which we plan to gather in workshops early next year to further develop and refine the indicators.«