Artificial Intelligence

Research news

AI is a hot topic across science and technology, from sensors for wind turbines to new cultivation methods for sugar beets to explainable AI. We summarize the latest news from AI research for you.

In the »SENSORITHM Rhein-Main« project, researchers at Goethe University Frankfurt are developing various self-learning sensor systems based on artificial intelligence (AI) methods. In the future, these systems should make it possible to optimize the operating times of wind turbines so that bat species and certain birds such as the red kite are not endangered. In addition, the wind turbines could shut down when flight activity increases. In this way, electricity generation from wind energy can be better reconciled with species protection. As a second pillar, the scientists in the project are developing new sensors and algorithms for the technical monitoring of wind turbines, and the technology can also be used for other industrial plants. The new sensors will be tested on various real test objects.

Supercomputer for Ilmenau

Several projects at TU Ilmenau aim to transfer human thinking and learning to computer systems. In the future, Ilmenau researchers will benefit from a powerful high-performance computer. The transfer project »thuringian AI« – thurAI for short – is researching three areas: healthcare and medical technology, production and quality assurance, and smart cities. To carry out these projects, a GPU cluster will soon go into operation at the TU Ilmenau computer center. By processing large amounts of data in parallel, the cluster will enable the sophisticated learning processes of modern AI techniques and significantly accelerate the training of AI algorithms. The computer thus enables the use of modern deep learning methods and gives the university and its partners access to high data processing rates.

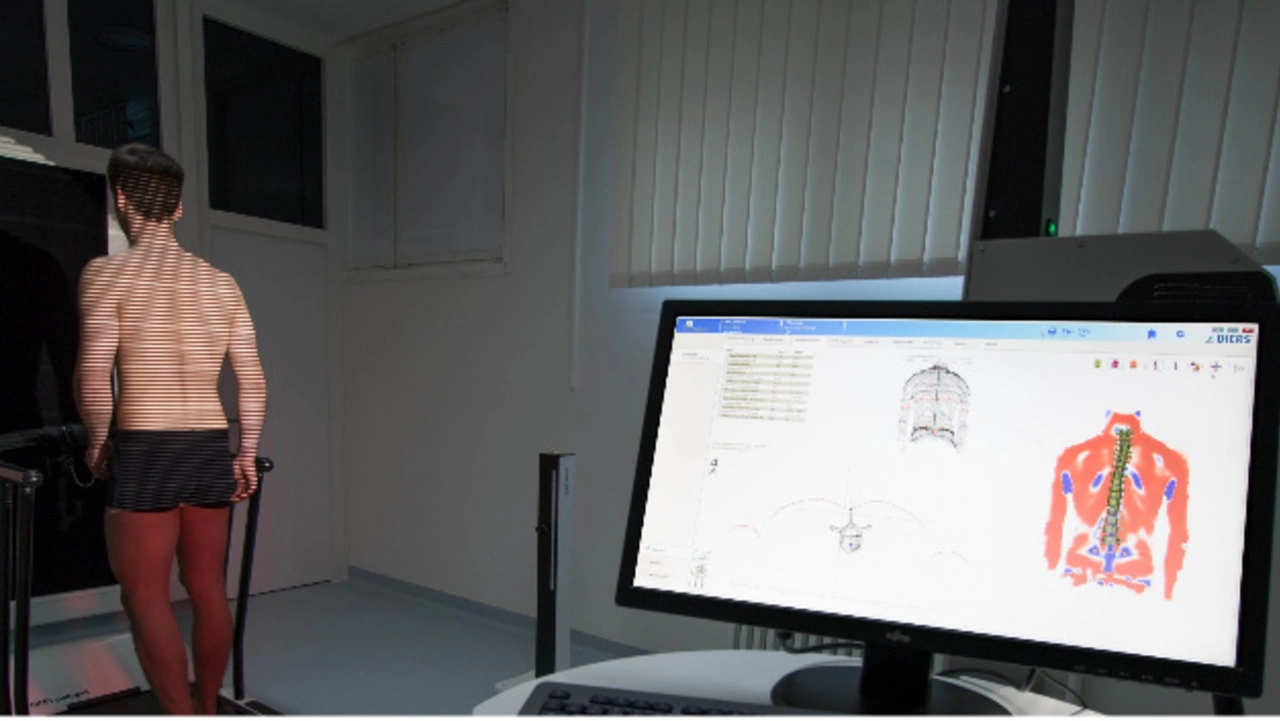

Detecting the cause of back pain

A team of researchers from the Technical University of Kaiserslautern and various partners is working on a method that will allow better observation of back deformities. AI methods are used to analyze each patient's spine individually. The procedure is intended to clearly identify the causes of the pain. The team relies on a diagnostic technique that is already well proven and widely used in practice. Using a projector and a camera unit, the team projects a grid of light onto the back and scans it. With so-called raster stereography, an individual model of the spine can then be calculated. What is new in the method is the use of AI and machine learning techniques, which allow the system to keep learning from the data it acquires. In the future, this can help medicine to better detect malpositions, for example, and to make personalized diagnoses that enable individualized therapy.

New cultivation methods for sugar beets

The joint project »zUCKERrübe« aims to enable sustainable and pesticide-free cultivation of field crops in the Uckermark region. Sugar beets serve as an example. In the project, scientists at Eberswalde University of Applied Sciences are investigating field robots, UAS technology and AI methods as well as the interaction of all components. The central task is to develop a new weed control technique so that sugar beet cultivation can be integrated into the crop rotation of sustainable agriculture. In addition, it should be possible to transfer the new technique to other plant species. In the development phase of the project, close cooperation with farms and regional processors plays a major role in ensuring practicality. Specifically, the researchers in the project will develop AI-based image analysis that runs on a low-resource hardware platform. This will make it possible to determine weed infestation and to localize the hoeing robot. Hardware accelerators are used for this purpose.

Goal: Explainable AI

The German Research Foundation (DFG) is establishing a new Collaborative Research Center on »Explainable AI« at the Universities of Paderborn and Bielefeld. The goal is to improve human-machine interaction, to focus on the understanding of algorithms and to investigate this understanding as the product of a multimodal explanatory process. »Citizens have a right to have algorithmic decisions made transparent. The goal of making algorithms accessible is at the core of so-called eXplainable Artificial Intelligence (XAI), which focuses on transparency, interpretability and explainability as desired outcomes«, says Prof. Dr. Katharina Rohlfing. Especially for predictions in medicine or jurisprudence, she says, it is necessary to be able to comprehend how the machine reached its decision.

Mastering AI

Artificial intelligence usually has a black-box character. Special software is available to explain the respective solution path. A study by Fraunhofer IPA compared and evaluated different methods that make machine learning processes explainable.

Users want to understand how a decision is reached, especially in critical applications: Why was the workpiece sorted out as defective? What is causing the wear on my machine? This is the only way to make improvements, which increasingly also affect safety. Experts from Fraunhofer IPA compared nine common explanation methods, such as LIME, SHAP or Layer-Wise Relevance Propagation, and evaluated them with the help of exemplary applications. Three criteria were of particular importance:

- Stability: The program should always provide the same explanation for the same task.

- Consistency: Input data that differ only slightly should also receive similar explanations.

- Fidelity: It is particularly important that explanations reflect the behavior of the AI model.
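
The three criteria can be made concrete with a small Python sketch. It is an illustration only and does not reproduce the metrics or methods used in the Fraunhofer IPA study: the `model` function, the toy perturbation-based `explain` function and the three scoring functions are all hypothetical stand-ins chosen to keep the example self-contained.

```python
import random

def model(x):
    """Hypothetical black-box model: a small nonlinear scoring function."""
    return 2.0 * x[0] - 1.0 * x[1] + 0.5 * x[0] * x[2] + x[1] ** 2

def explain(x, n_samples=200, noise=0.1, seed=None):
    """Toy explainer (an assumption, not LIME or SHAP): estimates each
    feature's local influence by averaging finite-difference slopes
    over random perturbations of that feature."""
    rng = random.Random(seed)
    attributions = []
    for i in range(len(x)):
        total = 0.0
        for _ in range(n_samples):
            delta = rng.uniform(-noise, noise) or noise  # avoid a zero step
            perturbed = list(x)
            perturbed[i] += delta
            total += (model(perturbed) - model(x)) / delta
        attributions.append(total / n_samples)
    return attributions

def max_abs_diff(a, b):
    """Largest per-feature difference between two explanations."""
    return max(abs(p - q) for p, q in zip(a, b))

def stability(x, seed=None):
    """Explains the same input twice; 0.0 means identical explanations."""
    return max_abs_diff(explain(x, seed=seed), explain(x, seed=seed))

def consistency(x, eps=1e-3, seed=0):
    """Explains a slightly shifted input; small values mean similar explanations."""
    shifted = [v + eps for v in x]
    return max_abs_diff(explain(x, seed=seed), explain(shifted, seed=seed))

def fidelity(x, step=0.05, seed=0):
    """Checks whether the feature the explanation ranks highest is also the
    one whose change moves the model output the most."""
    attributions = explain(x, seed=seed)
    effects = []
    for i in range(len(x)):
        nudged = list(x)
        nudged[i] += step
        effects.append(abs(model(nudged) - model(x)))
    top_by_explanation = max(range(len(x)), key=lambda i: abs(attributions[i]))
    top_by_model = max(range(len(x)), key=lambda i: effects[i])
    return top_by_explanation == top_by_model

sample = [1.0, 2.0, 3.0]
print("stability (fixed seed):", stability(sample, seed=0))
print("stability (no seed):   ", stability(sample))
print("consistency:           ", consistency(sample))
print("fidelity:              ", fidelity(sample))
```

With a fixed seed the toy explainer is deterministic, so the stability score is zero; without a seed, its random sampling makes two explanations of the same input differ slightly, which is the kind of behavior the stability criterion is meant to expose.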

Conclusion of the study: All the explanation methods investigated proved to be useful. However, there is no single perfect method. There are major differences, for example, in the runtime that a method requires. Which software is best also depends to a large extent on the task at hand.